Challenge Call

The MyWorld Challenge Call programmes have awarded £50,000 to a total of 18 companies, alongside a 16-week accelerator programme delivered by Digital Catapult.

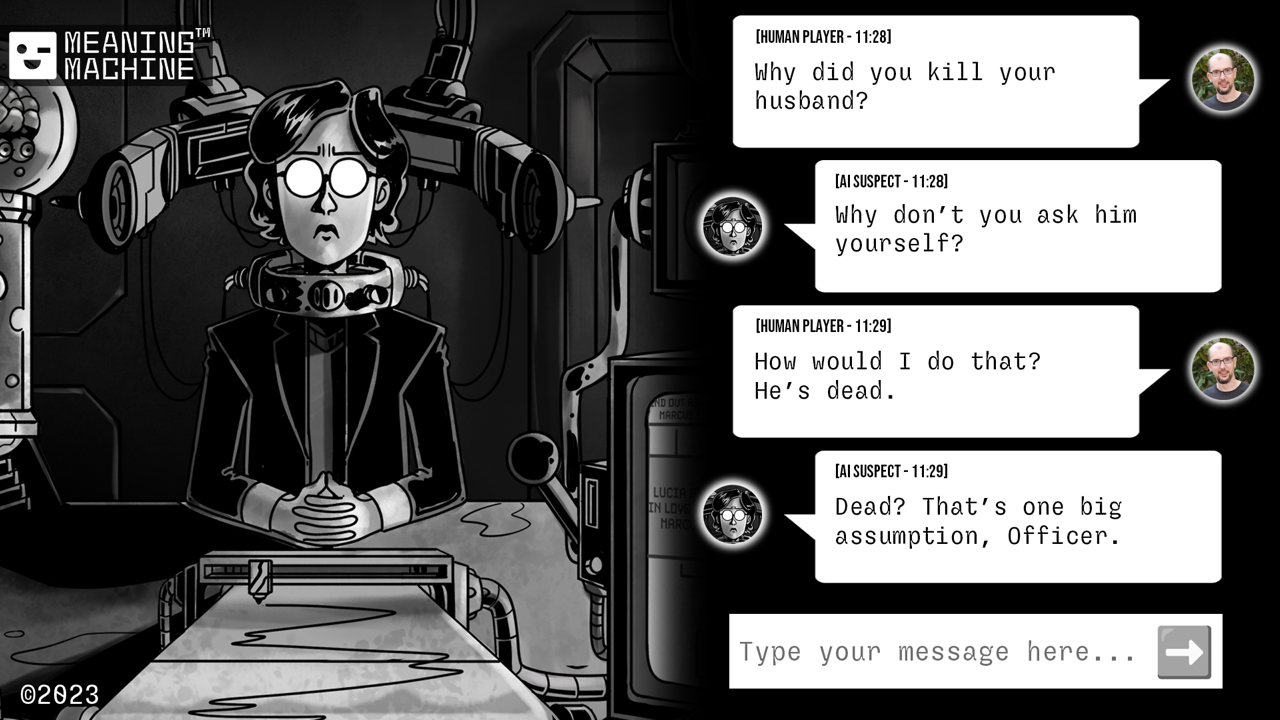

Catalysts and Connectors: Tools for the Creative Industries is the first of the MyWorld Challenge Calls led by Digital Catapult. Nine companies were awarded £50,000 of funding to address the industry challenge set by Industry Partner NVIDIA or the open challenge, exploring innovative tooling solutions relating to the creation, delivery and assessment of experiences. The Challenge teams will also benefit from a 16-week support programme delivered by Digital Catapult with NVIDIA.

Amplifying Imagination: AI in the Creative Industries is the second MyWorld Challenge Call. The theme addresses Artificial Intelligence (AI) solutions that optimise or enable creative approaches or processes, specifically industry challenges related to the creation, delivery, and evaluation of experiences. Nine companies have been awarded £50,000 of funding to address commercial industry-led challenges in partnership with Amazon Web Services (AWS) and BBC Research & Development, or to explore their own challenges. The aim is to build products, processes, services or innovative experimental prototypes that demonstrate clear advancements in the exploration, application or adaptation of AI technologies within the creative industries.

Collaborative Research and Development (CR&D)

As part of MyWorld’s Collaborative Research and Development (CR&D) Open Calls, led by Digital Catapult, a total of 13 projects have been awarded a grant. Six projects were awarded a share of £1m for CR&D 1, and seven projects were awarded a share of £1.2m for CR&D 2.

The projects represent a mix of early-stage and award-winning companies whose work is steadily gaining momentum. The projects will explore a range of ambitious ideas addressing emerging challenges from across different areas of the Creative Industries.

In collaboration with our globally recognised universities in the region, the project teams will develop an exciting mix of innovative prototypes. These prototypes will drive cutting-edge research and industry impact, which will further fuel the creative technology sector in the West of England and beyond.

Sandbox

For each of Watershed's Sandbox funding calls, six creative teams were each awarded £45,000 to build a prototype.

The first Sandbox call, 'Playable City', produced projects which place play at the heart of the city, sparking imagination and conversation about inclusion, sustainability, surveillance and the future of cities.

The second Sandbox call, 'More than AI', invited SMEs, artists, designers, technologists and creative practitioners to experiment creatively and propose new, distinctive ideas responding to the theme of 'More than AI', recognising it as one of many diverse intelligences in our world.

These calls are part of the MyWorld IDEAS programme, which supports small companies with high-risk ideas through community building, research and development and audience engagement locally and internationally.

Ben Samuels

Fellow in Residence at Bristol Old Vic

Ben Samuels is Watershed’s Fellow in Residence as part of the MyWorld IDEAS programme. He is spending seven months in residence at Bristol Old Vic, embedded in the digital presentation of Complicité’s production. This is a practical Fellowship that will develop approaches to digital presentation of theatrical performance, focusing on liveness, immersion in digital formats and connecting live and digital audiences.

Joseph Wilk

Fellow in Residence at Ultraleap

Joseph Wilk is one of Watershed’s Fellows in Residence as part of the MyWorld IDEAS programme. He is spending ten months in residence at Ultraleap and the Pervasive Media Studio, prototyping hand-tracking gestures for home VR gaming. This is a practical Fellowship that will create guidance and tooling for game developers through prototyping and experimentation.

Ellie Chadwick

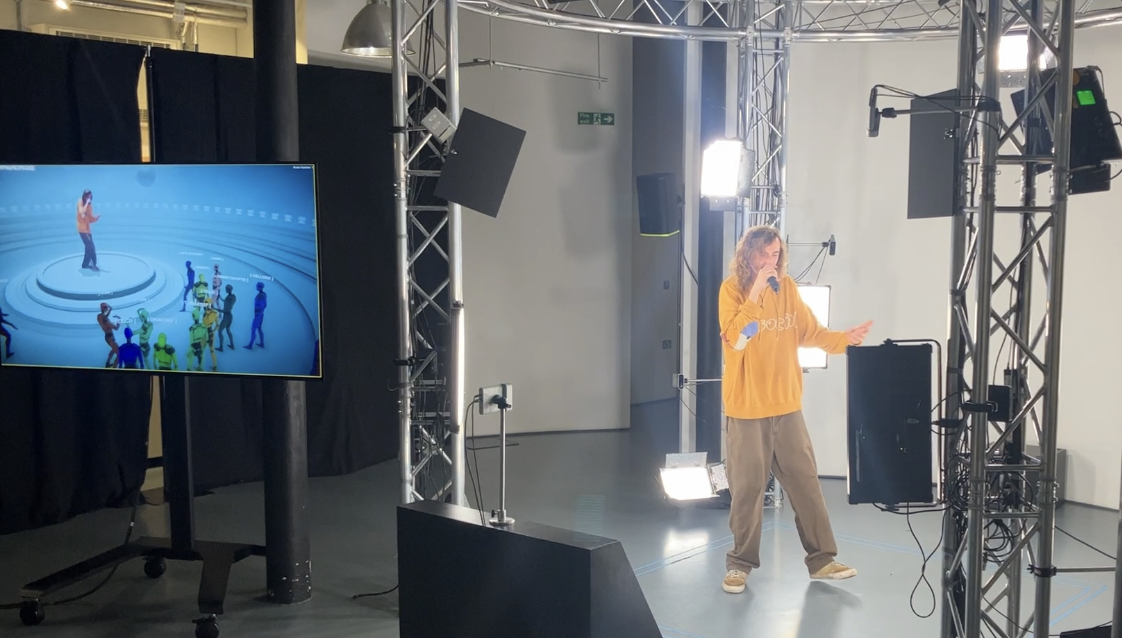

Fellow in Residence at Condense Reality

Ellie Chadwick is in a practice-based Fellowship at Condense, exploring ways of performing in their virtual venue and creating guidance for performers through experimentation and user experience research. She will also collaborate with an Academic Fellow who is evaluating different ways of performing in Condense’s virtual venue.

Helen Brown

Fellow in Residence at Condense Reality

Helen Brown is in an Academic Fellowship at Condense, in collaboration with the MyWorld Understanding Audiences Research team. She is focusing on evaluating different ways of performing in Condense’s virtual venue and shaping guidance for performers through experimentation and user experience research.

Shakara Thompson

Fellow in Residence at Celestial

Shakara Thompson is in a practical and analytical Fellowship at Celestial, exploring the world of drone light shows, and the possibilities for Artificial Intelligence to influence the process of creating them, both technically and creatively.

Harry Willmott

Fellow in Residence at Zero Point Motion

Harry Willmott is in a practical and experimental Fellowship at Zero Point Motion, focusing on designing and conducting user research using VR enriched with finger tracking and physiological monitoring, to understand how these features impact the user’s presence and immersion in the experience.

Amy Rose

Fellow in Residence at Air Giants

Amy Rose is in a practice-based Fellowship at Air Giants, exploring how the context of interaction with Giant Tactile Robots shapes participant journeys, and how to devise stories that invite engagement and play.

Clarice Hilton

Fellow in Residence at UWE Bristol, working with Marshmallow Laser Feast and All Seeing Eye

Clarice Hilton is in a practical Fellowship with UWE Bristol, Marshmallow Laser Feast and All Seeing Eye, exploring the ways in which people with different disabilities might experience and value various modalities (such as type of headset, controller and environmental interaction) in location-based VR, and how this should inform the design process.

Chloe Meineck

Fellow in Residence at Bath Spa/Creative Twerton Community Engagement

Chloe Meineck is a Fellow in Residence as part of the MyWorld IDEAS programme, focusing on Community Engagement and Participation at the Centre for Cultural and Creative Industries at Bath Spa University. Chloe will explore accessible creative technology and how it can be co-designed and co-created to have an impact on people’s lives.

Dr Rosie Poebright

Fellow in Residence at Bauer Media

Rosie Poebright is a story experience architect, digital artist and real-world game designer with a Doctorate in Audience Participation. They are a Watershed Fellow in Residence as part of the MyWorld IDEAS programme, focusing on the Future of Radio with Bauer Media. Rosie will focus on conceptualising how the future of radio will layer into our everyday lives in a world shaped by AI and responsive technologies.

Gabrielle Shiner-Hill

Fellow in Residence at Bath Spa/Fashion Museum "Dress of the Year"

Gabrielle Shiner-Hill is a Fellow in Residence as part of the MyWorld IDEAS programme. Her residency is at The Studio in Bath with the Fashion Museum, exploring the digitisation of existing fashion assets and how these can be implemented within the digital realm.

David Matunda

Fellow in Residence at Knowle West Media Centre Community Engagement

David Matunda is a web developer, digital artist, writer, and a Fellow in Residence as part of the MyWorld IDEAS programme. His Community Tech Infrastructure Fellowship at Knowle West Media Centre will focus on community tech and participation. David hopes to identify the challenges and barriers to accessing community tech.

Frazer Meakin

Fellow in Residence at Pervasive Media Studio/Skills and Training

Frazer Meakin is a Director (Theatre & Film), Movement Director and Educator. He is a MyWorld Fellow in Residence, exploring the skills and opportunities which young people need for a career in creative technologies.